What is Cisco ACI?

Intro

In this lesson we’ll briefly go over what Cisco ACI is, the components that make it all work, and some of the differences between ACI and non-ACI data center networks. Don’t worry, we’ll cover these topics in more detail in later lessons.

Application Centric Infrastructure

ACI stands for Application Centric Infrastructure. It’s Cisco’s Software-Defined Networking (SDN) solution for data centers. What does that even mean? Think about what happens when you want to make a change in a traditional networking environment. Let me give you a very simple example.

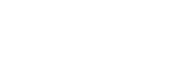

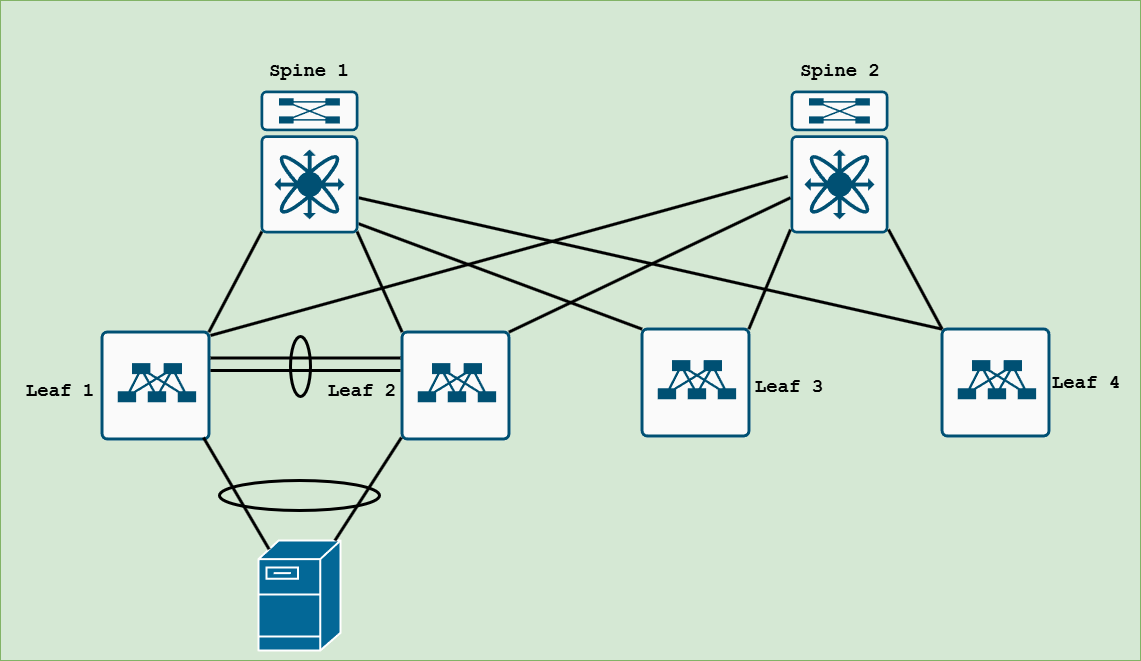

The above image shows a small spine and leaf architecture commonly deployed in data centers. Let’s say you need to make a change, like updating an NTP server or disabling CDP on multiple network switches and routers to address a vulnerability. You would first need to identify all of the devices that need the change, then manually configure the change on each device. It’s a time-consuming process and prone to errors: type the incorrect command or copy and paste the wrong thing, and suddenly things start to break.

With Cisco ACI, and most SDN solutions in general, we make the change once by creating a policy in the SDN environment, and it pushes the configuration change to the devices that need it. This saves you a ton of time and helps minimize mistakes.
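To make the idea concrete, here is a toy Python model of "define once, push everywhere". It is only a sketch and not the APIC API: the `Switch` and `FabricPolicy` classes, the setting names, and the NTP address (taken from the documentation range) are all made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Switch:
    """A hypothetical switch that just stores its settings in a dict."""
    name: str
    config: dict = field(default_factory=dict)


@dataclass
class FabricPolicy:
    """A hypothetical policy: one set of settings, defined once."""
    settings: dict

    def push(self, switches):
        # The same settings are applied to every target device,
        # so nobody retypes the change switch by switch.
        for switch in switches:
            switch.config.update(self.settings)
        return len(switches)


switches = [Switch(f"leaf-{n}") for n in range(1, 5)]
switches += [Switch("spine-1"), Switch("spine-2")]

# Define the change once (illustrative values only).
policy = FabricPolicy({"ntp_server": "192.0.2.10", "cdp_enabled": False})
updated = policy.push(switches)

print(f"{updated} switches updated from one policy")
```

The point is the shape of the workflow: the change lives in one place, and the loop over devices is the system's job rather than yours.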

ACI Components

ACI is made up of 3 main components.

- Nexus 9000 spine switches in ACI mode

- Nexus 9000 leaf switches in ACI mode

- Application Policy Infrastructure Controller (APIC)

The Cisco Nexus 9000 switches have to be dedicated to ACI mode. When you download images from Cisco’s website, you have the option to download traditional NX-OS images or the ACI-specific image. You then convert the switch to the mode you want, either NX-OS or ACI mode. You can change modes anytime you want, as long as the image for that mode is on the switch.

Nexus 9000 Series Switches:

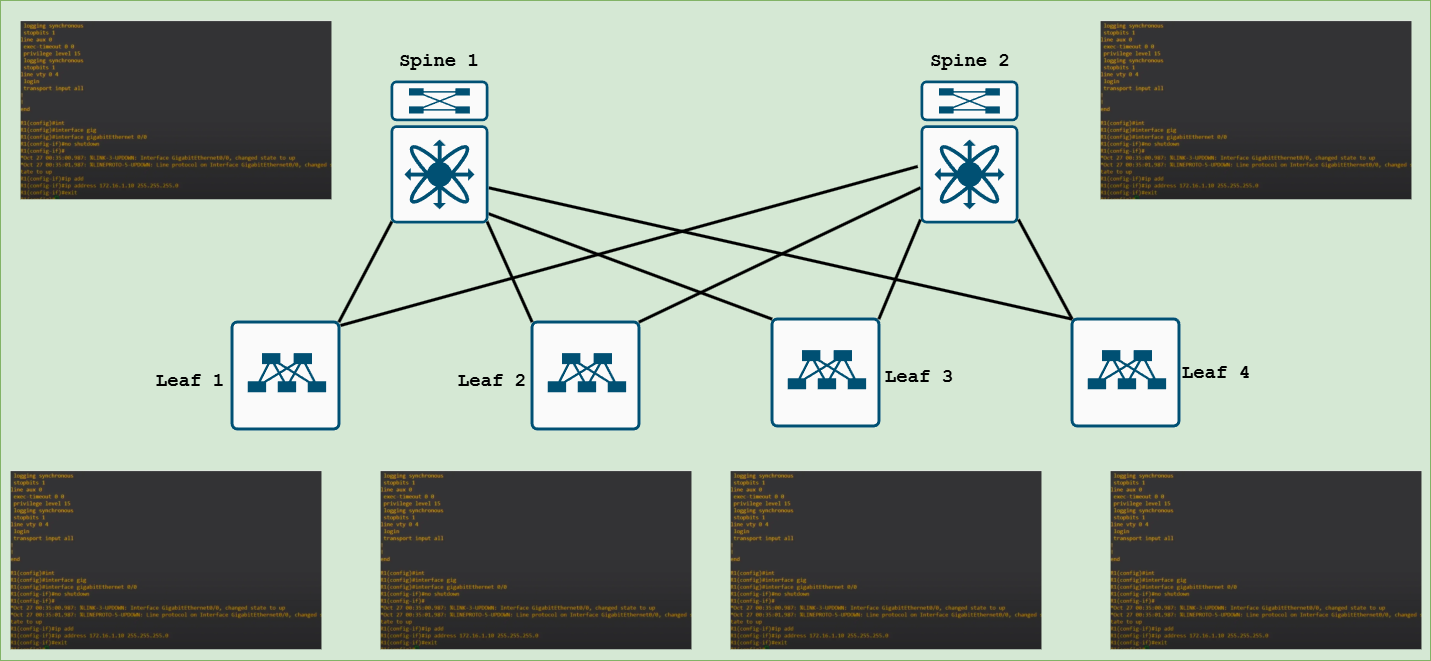

The physical switches are cabled in a Clos architecture, also referred to as a spine and leaf topology. Think of leaf switches as top-of-rack switches that endpoints like servers connect to. Each leaf switch is then physically connected to every spine. You can think of the spines as an aggregation or collapsed core layer. There is no leaf-to-leaf or spine-to-spine connectivity.
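The cabling rule is simple enough to write down in a few lines of Python. This is just a sketch of the topology described above; the switch names and the `build_fabric_links` helper are hypothetical.

```python
from itertools import product


def build_fabric_links(spines, leaves):
    """Return the cabling for a spine and leaf fabric as (leaf, spine) pairs."""
    # Every leaf connects to every spine; product() yields each pairing once.
    return [(leaf, spine) for leaf, spine in product(leaves, spines)]


spines = ["spine-1", "spine-2"]
leaves = ["leaf-1", "leaf-2", "leaf-3", "leaf-4"]
links = build_fabric_links(spines, leaves)

# No link joins two leaves or two spines.
assert all(a in leaves and b in spines for a, b in links)

print(len(links))  # 4 leaves x 2 spines = 8 links
```

Because every leaf reaches every spine, any two leaves are always exactly two hops apart (leaf, spine, leaf), no matter which pair you pick.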

Cisco APIC:

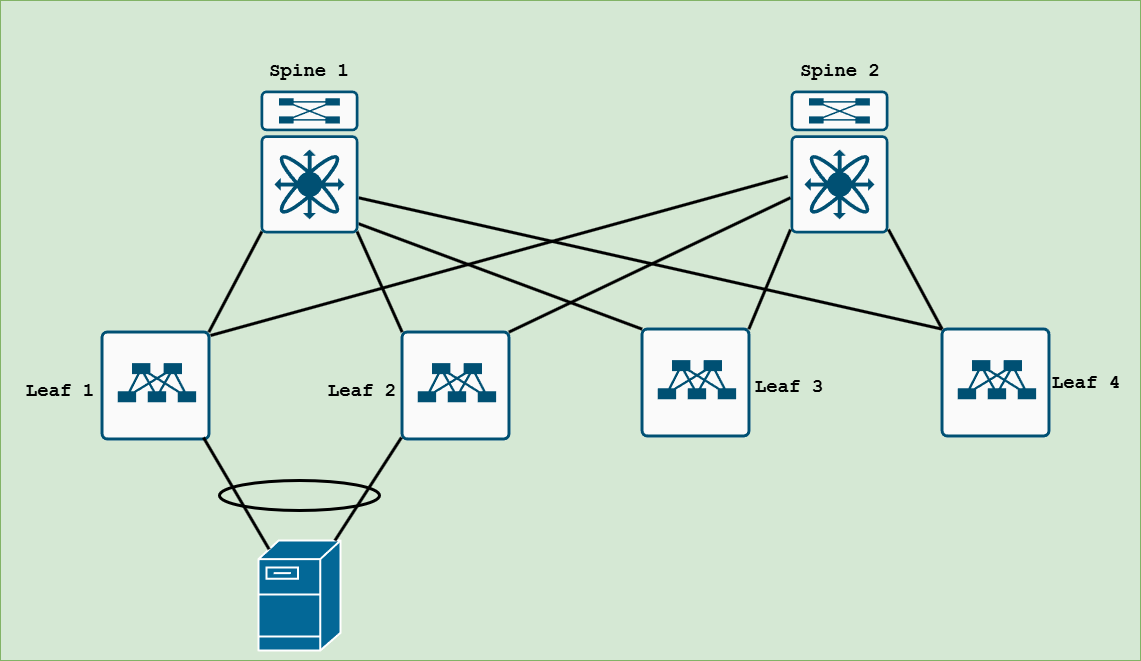

The APICs, which manage the entire ACI fabric, are commonly deployed in a cluster of 3 and are dual-connected to a leaf pair for redundancy. The leafs then connect to the spines, similar to the image below.

Think of APICs like the management interface of the ACI fabric. In Cisco ACI you don’t need to manage the Nexus 9000 spine and leaf switches separately. You manage the entire system from one central location, the APIC GUI. The APICs can run on a physical UCS C220 server or as a virtual machine hosted in your virtual environment. You need an odd number of APICs. Cisco recommends 3, 5, or 7 APICs per fabric, and if you want to use VMs, at least one physical APIC is required.

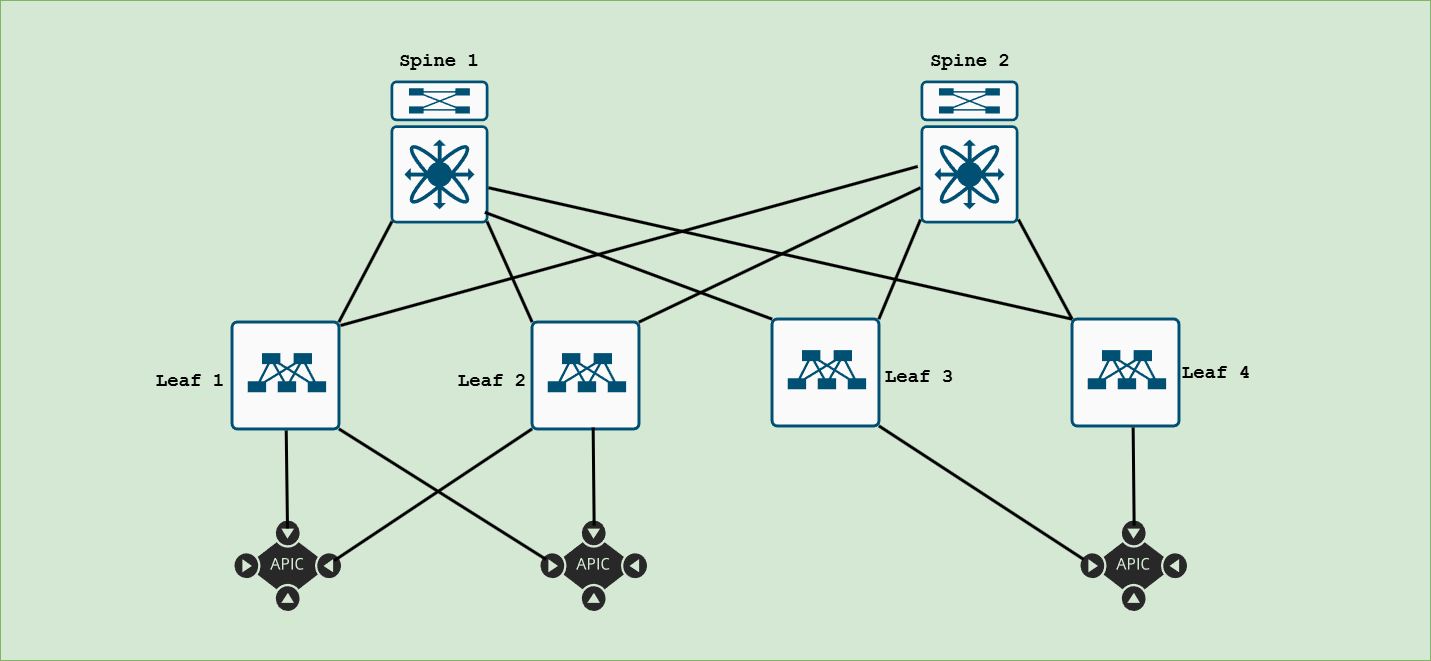

Obviously the APICs are important: you manage and configure your entire ACI fabric with them. What happens if all of the APICs lose connectivity to the network, or you lose the ability to access the APICs?

Nothing. That’s right, the ACI fabric will continue to handle traffic according to the most recent policy set by the APIC. You just can’t make any configuration changes. The APICs don’t affect data-plane traffic.

VXLAN in ACI

Virtual Extensible LAN (VXLAN) is a great overlay solution in the data center. It allows you to extend, or stretch, a Layer 2 network over a completely Layer 3 infrastructure. That means no more spanning VLANs across your entire network. You don’t have to worry about spanning-tree blocking redundant links; you don’t even need spanning-tree anymore. You get redundant Layer 3 links between spine and leaf switches that route using equal-cost multi-pathing (ECMP). But VXLAN has its drawbacks. A non-ACI data center using VXLAN can become a pain to manage as the network grows, especially when it comes to keeping track of VTEPs and VNIDs. ACI and VXLAN go hand in hand. You don’t ever have to configure VXLAN yourself in Cisco ACI; it’s all done for you behind the scenes.
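If you’re curious what a VNID actually looks like on the wire, the sketch below packs and parses the 8-byte header from the standard VXLAN specification (RFC 7348, where the field is called the VNI). This is the generic VXLAN header only; whatever extra information ACI carries in its own fabric encapsulation is not modeled here. The function names and the example VNID of 10100 are made up for illustration.

```python
import struct

VXLAN_UDP_PORT = 4789   # IANA-assigned UDP destination port for VXLAN
VNI_VALID_FLAG = 0x08   # "I" flag: the VNI field carries a valid value


def build_vxlan_header(vni: int) -> bytes:
    """Pack the 8-byte VXLAN header defined in RFC 7348."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # First 32-bit word: flags in the top byte, then 24 reserved bits.
    # Second 32-bit word: 24-bit VNI, then 8 reserved bits.
    return struct.pack("!II", VNI_VALID_FLAG << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from the first 8 bytes of a VXLAN header."""
    _, second_word = struct.unpack("!II", header[:8])
    return second_word >> 8


header = build_vxlan_header(10100)
print(header.hex())        # 0800000000277400
print(parse_vni(header))   # 10100
```

The 24-bit field is why VXLAN scales to roughly 16 million segments, compared with the 4096 IDs available in a 12-bit VLAN tag.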

Virtual Port-channels (vPC) in ACI

In a traditional Cisco non-ACI data center environment, it’s common to see servers dual-homed to a pair of Nexus switches that are set up in a Virtual Port-Channel (vPC). Two switches in a vPC make up a vPC domain. vPC requires a dedicated peer link using one or more links between the two switches, like the image below. Take a look at leaf 1 and leaf 2.

In Cisco ACI you can have a vPC between two switches, but there’s no dedicated peer link. The peer link connectivity goes through the spines instead. In the below image, leaf 1 and leaf 2 are in a vPC domain, but the connectivity for the peer link goes through the spines. No dedicated peer link is required between leaf 1 and leaf 2.

One of the differences between Cisco ACI and a non-ACI data center with Nexus switches is that with ACI you can add a leaf or spine switch to the network and, once it’s discovered, the fabric connections between spine and leaf are automatically configured with IP addressing, routing, etc. Traditionally you would have to configure IP addressing and routing protocols for all of the ports, device by device. Cisco ACI saves you time by doing this automatically on the backend. Initial provisioning of the ACI fabric is designed to be plug and play.
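To get a feel for what "automatically configured" means, here is a small Python sketch of a controller handing out addresses to switches as they are discovered. It illustrates the idea only and is not how the APIC implements it: the `AddressPool` class, the `10.0.0.0/16` pool, and the switch names are assumptions made for the example.

```python
import ipaddress


class AddressPool:
    """A hypothetical allocator that hands out host addresses in order."""

    def __init__(self, cidr: str):
        # iter() gives us a one-at-a-time view of the usable host addresses.
        self._hosts = iter(ipaddress.ip_network(cidr).hosts())
        self.assigned = {}

    def register(self, switch_name: str):
        # A switch that was already discovered keeps its address.
        if switch_name not in self.assigned:
            self.assigned[switch_name] = next(self._hosts)
        return self.assigned[switch_name]


pool = AddressPool("10.0.0.0/16")  # assumed pool, chosen for the example
for name in ["spine-1", "leaf-1", "leaf-2"]:
    print(name, pool.register(name))
```

Nobody typed an IP address per device here. Plugging in a new switch just means one more `register` call, which is the plug and play experience the fabric is aiming for.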